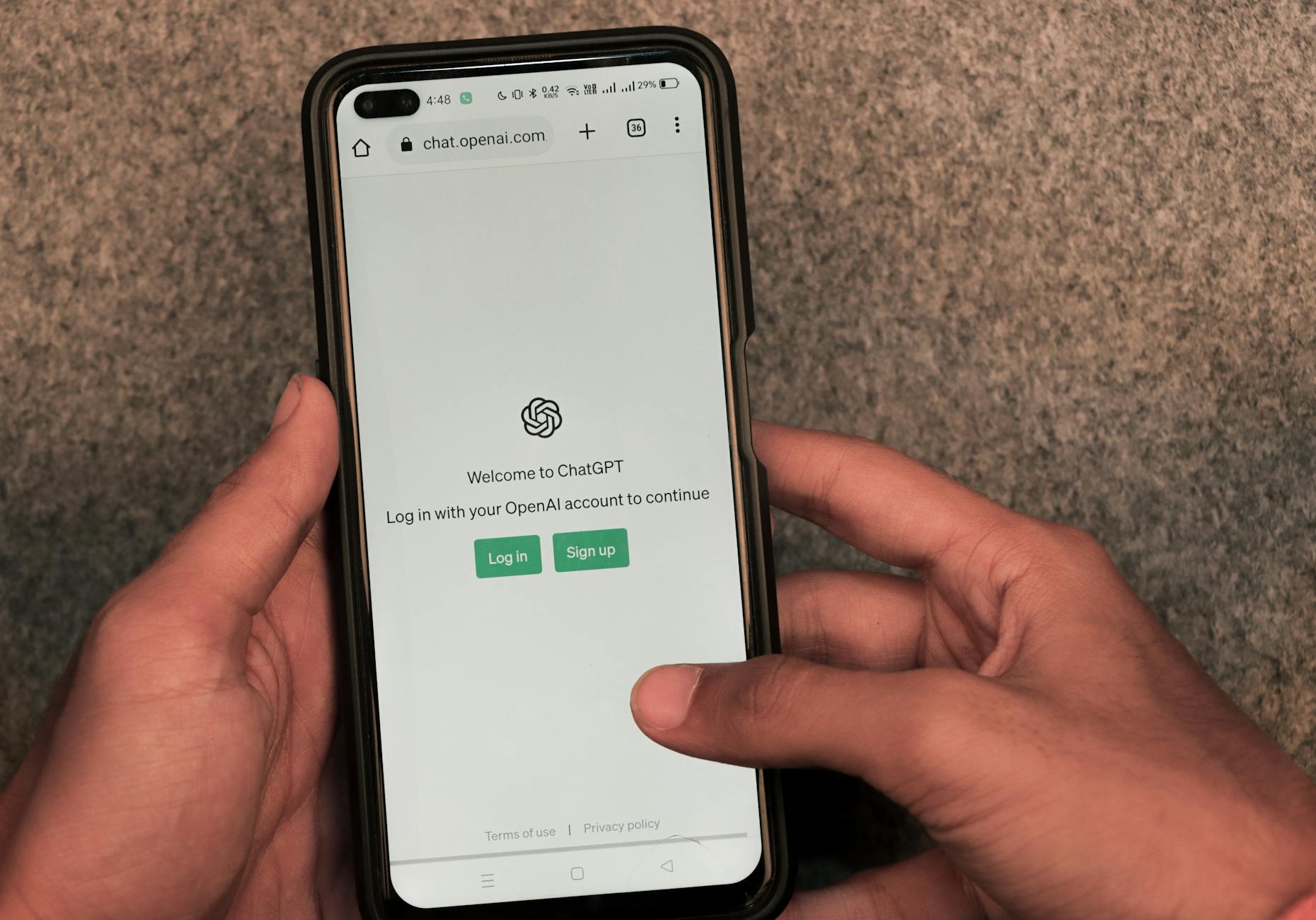

Despite its advances, GPT-5, which OpenAI released in August 2025, still hallucinates on roughly 1 to 3% of common tasks, depending on the benchmark. That is far less than its predecessors, but it remains a risk for critical uses such as medicine or historical facts.

For instance, on HealthBench, the hallucination rate drops to 1.6% with “thinking” mode on, versus 3.6% without.

On LongFact-Concepts, it’s just 1.0%, a huge improvement over o3’s 5.2%.

In practice, a 1 to 3% rate means GPT-5 might get roughly one to three out of every hundred factual tasks wrong, and tests show a significant reduction overall: 26% fewer hallucinations than GPT-4o.
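To make the percentages above concrete, here is a small sketch that turns a per-task hallucination rate into an expected error count and computes the relative drop between two rates. The function names are illustrative, and the "at least one error" helper assumes every task is an independent trial with the same rate, which real workloads will not exactly satisfy.

```python
# Sketch: converting hallucination rates into expected error counts and
# relative reductions. Rates are the benchmark figures quoted in the text;
# treating tasks as independent trials is a simplifying assumption.


def expected_errors(rate: float, tasks: int) -> float:
    """Expected number of hallucinated answers over `tasks` tasks."""
    return rate * tasks


def relative_reduction(new_rate: float, old_rate: float) -> float:
    """Fractional drop from old_rate to new_rate (0.26 means 26% lower)."""
    return (old_rate - new_rate) / old_rate


def prob_at_least_one(rate: float, tasks: int) -> float:
    """Chance of one or more hallucinations, assuming independent tasks."""
    return 1.0 - (1.0 - rate) ** tasks


if __name__ == "__main__":
    # HealthBench: "thinking" mode on (1.6%) vs off (3.6%)
    print(f"HealthBench reduction: {relative_reduction(0.016, 0.036):.0%}")
    # LongFact-Concepts: GPT-5 (1.0%) vs o3 (5.2%)
    print(f"LongFact-Concepts reduction: {relative_reduction(0.010, 0.052):.0%}")
    for rate in (0.01, 0.03):
        print(
            f"rate {rate:.0%}: {expected_errors(rate, 100):.0f} errors per 100 tasks, "
            f"P(at least one error in 100) = {prob_at_least_one(rate, 100):.0%}"
        )
```

By this arithmetic, switching "thinking" mode on cuts the HealthBench rate by about 56% in relative terms (0.020 / 0.036), and the LongFact-Concepts gap to o3 is about 81% (0.042 / 0.052). The last helper shows why a low per-task rate still matters for critical uses: across many independent tasks, the odds of at least one fabricated answer add up quickly.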

OpenAI emphasizes hallucination reduction as the path to more reliable answers, but developers still report ongoing “headaches” in production, such as WhatsApp agents that sporadically invent information.